
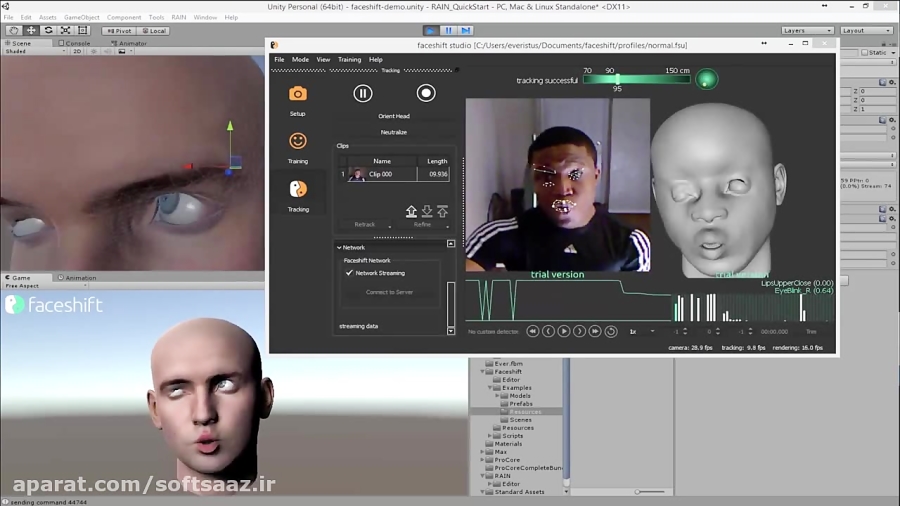


I read back then that someone even tried using the lenses from a cheap pair of drugstore glasses to magnify the Kinect lenses for better face-capture close-ups. They popped the lenses out of the frame, taped them over the Kinect camera lenses with Scotch tape, and it worked better.

# Faceshift Studio Gone Full

From what I understood before, the Kinect does not have as short a minimum distance as the Asus, so it is not well suited to face capture; it is better for wide-angle, full-body views.
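A rough pinhole-camera estimate shows why minimum distance matters for face capture. At distance d, a camera with horizontal field of view θ sees a strip 2·d·tan(θ/2) wide, so an object of width w covers about W·w / (2·d·tan(θ/2)) pixels of a W-pixel-wide image. The sketch below assumes a 57° horizontal field of view, a 640-pixel-wide depth image, and a 15 cm wide face. These are illustrative values, roughly in line with figures often quoted for first-generation Kinect-class sensors, not specifications stated in this post.

```python
import math


def pixels_across(object_width_m: float, distance_m: float,
                  hfov_deg: float = 57.0, image_width_px: int = 640) -> float:
    """Approximate horizontal pixel coverage of an object under a pinhole model.

    The defaults (57 degree horizontal FOV, 640 px wide image) are assumed,
    illustrative values, not measured sensor specifications.
    """
    # Width of the scene visible at this distance, in metres.
    visible_width_m = 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)
    # Fraction of the visible width the object fills, scaled to pixels.
    return image_width_px * object_width_m / visible_width_m


if __name__ == "__main__":
    face_width_m = 0.15  # assumed face width
    for d in (0.5, 0.8, 1.2):
        print(f"{d:.1f} m -> {pixels_across(face_width_m, d):.0f} px across the face")
```

Coverage falls off as 1/d across the face (and as 1/d² in total samples), so a sensor that can only measure from farther away gets proportionally less detail on the face.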

# Faceshift Studio Gone Pro

I don't have a depth-sensor camera now; I only tried out the Asus Xtion and then returned it to Newegg within 30 days. They now have the Asus Xtion Pro for $170, while all the other stores are asking over $200. The newer Faceshift version also has improvements, and you won't get errors such as "Setup of FaceShift Studio 2015 is corrupted or is missing files."

# Faceshift Studio Gone Trial

Facial capture methods based on depth cameras have achieved great success in film and game production; Faceshift Studio, for example, is well-known facial motion capture software. Of everything out there for markerless mocap, I like Faceshift best. I tried the trial version about two years ago with the Asus Xtion, not the newer Xtion Pro model, which has some improvements and adds USB 3. The freelance version is more expensive, but if it lands you paid jobs, it will pay for itself very quickly.

Brekel Kinect and Faceshift are really in two different leagues. It may not be obvious from my example, but Faceshift does much more than the head, lip, and eyebrow tracking you get with the Kinect SDK. First, it scans your face and retargets it to a custom avatar with the usual 42 FACS morph targets; a side effect is that you can get a decent virtual clone of yourself in a pinch. Then it tracks all those FACS morphs in real time to recreate every single deformation of your face (eyes, eyelids, cheeks, jaw, nose, brows, lips…). So, provided you have a matching target rig, it allows for much more subtle acting.

At last, with the aforementioned plugin, it's now a blast to use in C4D, whereas it used to be very convoluted because of C4D's lack of good FBX support (only bones came through, no morphs).

As for the price, I'm using the non-commercial version at the moment. For toying around and experimenting, it's very affordable and worth every penny. You can learn more about Faceshift on their beautiful new website, faceshift.

# Faceshift Studio Gone Software

Faceshift, an impressive markerless facial motion tracking and real-time character animation system, has announced the release of a wireless facial tracking helmet, designed by Mocap Design, that can be used alongside third-party full-body motion capture systems like Perception Neuron. It is being launched alongside their new Faceshift StudioPro software, which boasts a killer set of features including wireless control, remote triggering, timecode support, batch processing, and real-time face tracking and targeting. This enables the accurate capture of facial expressions during full-body motion capture, allowing content creators to capture lifelike body and facial animations simultaneously while giving the actor the freedom to move through space.

The helmet is incredibly lightweight and features carbon arms that let the user fit the headset comfortably to their head. The small attached sensor shoots 60 frames per second of both depth and video. The markerless motion capture is made possible by tracking the depth of the face while simultaneously tracking every pixel individually and computing optical flow over time. According to Doug Griffin of Faceshift, this system works "as well or better" than their desktop version and will be used by professional motion capture studios.

In other news, Faceshift is showcasing their non-pro studio version, which features accurate facial tracking, a streamlined UX, synchronized audio, flexible retargeting, and Intel RealSense support.
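The FACS morph-target workflow described for Faceshift above rests on a standard linear blendshape model. Each expression target is a displaced copy of the neutral mesh, and an animated frame is the neutral mesh plus a weighted sum of the target offsets. The tracker estimates those per-frame weights; retargeting then applies the same named weights to another rig with matching targets. The minimal sketch below shows only that blend and weight transfer on toy three-vertex meshes. The target names (`jawOpen`, `browInnerUp`), the mesh data, and the `blend` helper are hypothetical, and this is not Faceshift's actual code or file format.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
Mesh = List[Vec3]


def blend(neutral: Mesh, targets: Dict[str, Mesh], weights: Dict[str, float]) -> Mesh:
    """Evaluate a linear blendshape model: neutral + sum_k w_k * (target_k - neutral).

    Targets not named in `weights` contribute nothing (weight 0). A weight that
    names a target the rig lacks raises KeyError, so mismatched rigs fail loudly.
    """
    result = [list(v) for v in neutral]
    for name, w in weights.items():
        target = targets[name]  # KeyError if this rig has no such target
        if len(target) != len(neutral):
            raise ValueError(f"target {name!r} has a different vertex count")
        for i, (tv, nv) in enumerate(zip(target, neutral)):
            for axis in range(3):
                # Add this target's weighted offset from the neutral pose.
                result[i][axis] += w * (tv[axis] - nv[axis])
    return [tuple(v) for v in result]


# Hypothetical source rig (a scanned actor) and target rig (a stylized avatar):
# same target names, different geometry.
actor_neutral: Mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.5, 1.0, 0.0)]
actor_targets = {
    "jawOpen": [(0.0, -0.2, 0.0), (1.0, -0.2, 0.0), (0.5, 1.0, 0.0)],
    "browInnerUp": [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.5, 1.1, 0.0)],
}
avatar_neutral: Mesh = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 3.0, 0.0)]
avatar_targets = {
    "jawOpen": [(0.0, -0.5, 0.0), (2.0, -0.5, 0.0), (1.0, 3.0, 0.0)],
    "browInnerUp": [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 3.4, 0.0)],
}

# One frame of weights; in a real system these would come from the tracker.
frame_weights = {"jawOpen": 0.5, "browInnerUp": 1.0}

if __name__ == "__main__":
    # Retargeting: the same named weights drive the avatar rig.
    print(blend(avatar_neutral, avatar_targets, frame_weights))
```

Because the blend is linear in the weights, one weight vector can drive any rig whose targets share names and meanings; each rig's own target shapes decide how large the resulting deformation is, which is why a matching target rig matters.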